Overview

In my thirties, I was part of the change generation. I took on civil rights, women’s rights, and gay rights. I had two children and was divorced. At first I didn’t stop to wonder what was driving all these changes. Was I simply taking part in societal upheaval?

Looking around, I noticed that almost all my friends were divorcing. These could not all be individual choices. An entire society had altered its values and ways of living. I had this insight: it was social change, and not just individuals, that drove what I was seeing. Individuals may not see or comprehend the social pressure, and therefore they are unduly influenced by it.

In trying to understand the breakup of so many nuclear families, I turned to Bowen’s family systems ideas. This led me to an understanding of how families function and how anxiety is played out in relationships. I thought that because of such tremendous change in our generation, our children would live in a generation of less sweeping social change.

Now I am again seeing sweeping social change, and I consider that it may be our grandchildren who are rapidly changing and forcing us to see and adapt to the next big societal change.

Yes, there was an increase in divorce and people learned to adapt to a variety of relationships. Forty years later, my family is a tribe with loose connections. There have been multiple marriages and partnerships. The eight grandchildren and three step-grandchildren have a personal awareness of the extended family, the triangles, and emotional cutoffs, and to some extent what to do about them. All of us still have the challenge of relating well to the strangers. Some of us use systems knowledge as best we can to rise above side-taking and scapegoating. But I have never lost my interest in understanding social change and imagining the possible future.

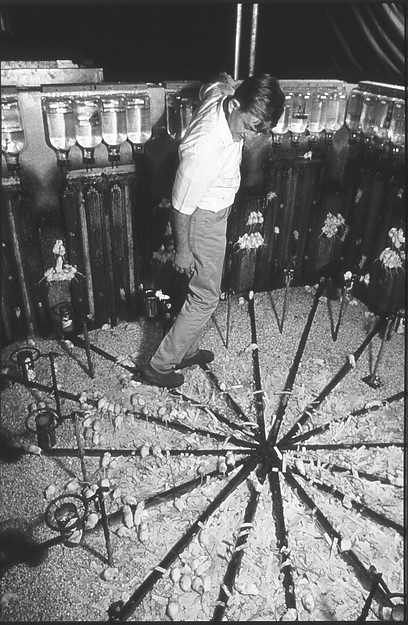

One researcher, Jack B. Calhoun, tried to predict the possible future for humans by studying colonies of mice. If mice lived in a nice clean environment with plenty of food and water, what would happen when physical space was limited? How would the social structure change?

In the ‘90s I asked him to read my paper called “The Biological Need to Keep your Ex-in Laws.” The thesis was that the family was automatically reshaping itself to adapt to changing conditions, most specifically an increase in worldwide population. One issue facing us would be that a majority of women would be divorced from reproduction. This would enable the population to decrease.

Calhoun noted that humans would have to be far more cooperative and tribal to withstand the pressures of the changing social makeup. He predicted that by the year 2024 we would use a computer-like extension of the brain to make better decisions in the face of the increased population.

Anticipated changes as a result of the increase in numbers

As we have moved from 2 billion to 7 billion people over the last hundred years, the family unit has undergone significant changes. There were four children in the average family. Now there are two. There has been an increase in single-parent families[i] and often a decrease in the number of relationships available to an individual family member.

Changing demographics require adaptations. Overpopulation in Jack Calhoun’s mouse research led to a “behavioral sink”: the distressed population lost the ability to maintain relationships and reproduce. In his custom-built mouse universes, Calhoun encouraged new behaviors, noting that successful animals could cooperate when forced to do so to obtain water. In addition, the more competent animals had twelve important relationships, which let them balance frustration and gratification as the population increased.[ii]

Hopefully, despite the population pressures, the evolutionary processes that shape social animals to care about the group they are a part of, now and into the future, will continue to operate.

As a grandparent, I may not be alive to see the future but I want to talk with my grandchildren about it. Clearly, they too are interested in spending some amount of their life energy challenging grandparents to keep up their health and well-being and their thinking.

For example, Andrew, my oldest grandson, majored in computer science. Now I find myself reading books like Algorithms to Live By: The Computer Science of Human Decisions by Brian Christian and Tom Griffiths or Nick Bostrom’s Superintelligence: Paths, Dangers, Strategies. Andrew’s interests are prompting me to learn and think about the amazing ways we might adapt using Artificial Intelligence (AI).

I fell into the world of AI wondering just how a future superintelligence will come to have values that enhance human relationships and life. Over Christmas, Andrew gave his younger brother, Patrick, the book I, Robot. As a youngster, I loved I, Robot, and the three laws of robotics were clear values that regulated caring about others:

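1) A robot may not injure a human being or, through inaction, allow a human being to come to harm. 2) A robot must obey the orders given it by human beings except where such orders would conflict with the First Law. 3) A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.[iii]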

I am still intrigued by and enamored of robots. After all, robots are the optimal knowledge machines, solving problems like cancer or global warming without having to rely on our fascination with the irrational thinking and superstitions of charismatic leaders. Robots love facts and doing stuff. They do not take sides or need power. They should be carefully programmed.

A superintelligent learning machine has its own reasons to complete a task. Such machines want answers, and they are immune to relationship pressures and anxiety.

Elon Musk and Ray Kurzweil (two of my new human heroes) think the only way for humans to survive is to fuse with the machines. They are us and we are them. The bots enter our bloodstream, connecting us to a neural network based in the cloud. By the year 2030 our survival will be intertwined with that of the robots.

My grandson Patrick will be 28 by then, and like most of us, probably will not yet have absorbed all of Wikipedia, unlike Watson, the IBM computer, who already has.

Watson will be twice as smart next year, and then in another six months it will have another doubling of information, and the doubling continues exponentially in time. If these machines can solve the riddle of cancer or global warming, is it possible that they might be put to the task of understanding our ancient emotional system?

Robots could be curious and learn about emotional reactivity in relationships. Robots could be programmed to observe and ask the human questions. What are the short- and long-term consequences of these actions? Have you considered these alternatives? The percentage chance of this decision working out is “X”.

The robot might have to give us ideas even if we do not want them, like: If you play one more game of golf, your wife might… Robots might even know if we are harming others through inaction. To do no harm requires a highly developed level of awareness and the ability to begin to predict the future.

Pedro Domingos, an expert in machine learning, sees experience as the great teacher, even for machines.[iv] A bot in our bloodstream would learn a lot. It could sense increasing threats or stress and give the human a rational warning in emotional language: Wow! You are getting over-involved. Think, think, think for a minute, what else might you do? Here are three quick suggestions to mull over.

Social pressure is not a problem for bots. Your friendly family bot could calculate the automatic nature of avoidance, the automatic anxiety absorber, the “other focus”, blame and guilt, or how one can innocently wander into an emotional divorce. Our bot can remind us that 82% of the time this or that behavior leads to one becoming a hapless victim of the system. Bots have nothing to gain by going along with scapegoating. They would have no fear of losing self in a love affair.

The bot would be free to give feedback about how to achieve cooperation with others. They would be immune to rejection or name calling or even a loss in social status due to a physical illness. Robots do not need money or power to get along with the human. This value, aiding human productivity, will have to be a part of the way the robot is constructed. People are already working on this.

Only time will tell if a machine built to learn from experiences with humans will value family systems thinking and emotional maturity. Perhaps the superintelligent robot will see humans as savages and leave us to be regulated by the law of the jungle. If this is not to be our path, then we will have to be able to better define ourselves in relationship to each other and the robots.

Emotionally mature programmers may ask the superintelligence, the robots, to solve problems a little bit better than we can. For example, what are the problems that can lead to humans having successors?

Perhaps then we can ask more significant questions. Perhaps we can even let the AI figure out its own way with just a slight nudge in values to go with it. The AI might be given “the motivation to achieve that which we would have wished the AI to achieve if we had thought about the matter long and hard.”[v]

Until the arrival of the superintelligence, humans will continue to form social groups, competing and forming hierarchies as we and the mice have done for millennia. But perhaps one day, differences will not loom as large. The focus will be on adapting to and solving complex problems, since problems, we know, will always be shaping our future.

Ideas for further reflection

Michael Schrage is a research fellow at the MIT Initiative on the Digital Economy (IDE) and the MIT Sloan School of Management, and author of The Innovator’s Hypothesis: How Cheap Experiments Are Worth More Than Good Ideas. He notes that:

[…]workers will get greater returns investing in “selves improvement”— “selves” that are smarter, bolder, more creative, more persuasive and/or more empathic than one’s “typical” or “average” self.

“Selvesware” delivers actionable, data-driven insights and advice on what to say, when to speak up and with whom to work, and suggests options to create, communicate and collaborate. It invites workers to digitally amplify their best attributes, while monitoring and minimizing their workplace weakness. In this future, the AI revolution is less about “artificial intelligence” and more about “augmenting introspection.”[vi]

Buckminster Fuller created the “Knowledge Doubling Curve”; he noticed that until 1900 human knowledge doubled approximately every century. By the end of World War II knowledge was doubling every 25 years. Today things are not as simple, because different types of knowledge have different rates of growth. For example, nanotechnology knowledge is doubling every two years and clinical knowledge every 18 months. But on average, human knowledge is doubling every 13 months. According to IBM, the build-out of the “internet of things” will lead to the doubling of knowledge every 12 hours.[vi]
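For readers who like to see the arithmetic, here is a minimal sketch in Python that turns the doubling periods quoted above into a common yardstick: doublings per year. The function names, the labels, and the simplifying assumption of a 365-day year are mine, for illustration only; they are not from Fuller or IBM, and the figures themselves are simply the ones quoted in the paragraph.

```python
# Doubling periods as quoted in the paragraph above, expressed in years.
# Assumption for illustration: a year is 365 days of 24 hours.
HOURS_PER_YEAR = 24 * 365

DOUBLING_PERIOD_YEARS = {
    "until 1900 (every century)": 100.0,
    "end of World War II (every 25 years)": 25.0,
    "nanotechnology (every two years)": 2.0,
    "clinical knowledge (every 18 months)": 1.5,
    "average today (every 13 months)": 13 / 12,
    "internet of things (every 12 hours)": 12 / HOURS_PER_YEAR,
}


def doublings_per_year(period_years):
    """How many times a quantity doubles in one year."""
    return 1.0 / period_years


def growth_factor(period_years, span_years):
    """How many times larger a quantity is after span_years,
    if it doubles once every period_years."""
    return 2.0 ** (span_years / period_years)


if __name__ == "__main__":
    for label, period in DOUBLING_PERIOD_YEARS.items():
        print(f"{label}: {doublings_per_year(period):.2f} doublings per year")
```

Seen this way, a 25-year doubling period means knowledge grows fourfold in 50 years, while a 12-hour doubling period would mean roughly 730 doublings in a single year. That gap is what makes the last figure so hard to picture.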

Isaac Asimov

Now humans caught in an impossibility often respond by a retreat from reality: by entry into a world of delusion, or by taking to drink, going off into hysteria or jumping off a bridge. It all comes to the same thing – a refusal or inability to face the situation squarely. And so too the robot.

Nick Bostrom wrote in Superintelligence: Paths, Dangers, Strategies –

AI can be less human in its motivation than a green scaly space alien. The extraterrestrial (we will assume) has arisen through evolutionary processes and therefore can be expected to have the same kind of motivations typical of evolved creatures…. A member of an intelligent social species might have motivations related to air, food, water, survival, recognition of threat, disease, sex, progenies, group loyalty, resentment of free riders, perhaps even have concerns with reputation and appearance. An AI by contrast may not value any of these things. Page 127

The final goal, ask that AI achieve that which we would have wished the AI to achieve if we had thought about the matter long and hard. Page 173

Videos and Readings of Interest

- Elon Musk – AI Advancement

- Google’s Deep Mind Explained! – Self Learning

- Sam Harris – Scared of superintelligent AI?

- Nick Bostrom – What Happens When Our Computers Get Smarter than We Are?

- Pedro Domingos – The Quest for the Master Algorithm

- Ray Kurzweil – How to Create a Mind

- Richard Dawkins – Red in Tooth and Claw

- Murray Bowen – Family Therapy in Clinical Practice (p. 22)

Footnotes:

[i] http://www.pewsocialtrends.org/2015/12/17/1-the-american-family-today/

[ii] https://www.washingtonpost.com/news/retropolis/wp/2017/06/19/the-researcher-who-loved-rats-and-fueled-our-doomsday-fears/?utm_term=.8fd14940fd72

[iii] https://en.wikipedia.org/wiki/Three_Laws_of_Robotics

[iv] Pedro Domingos, The Master Algorithm: How the Quest for the Ultimate Learning Machine Will Remake Our World (Basic Books, 2015)

[v] Nick Bostrom, Superintelligence: Paths, Dangers, Strategies (page 173)

[vi] https://techcrunch.com/2017/06/21/how-technology-enabled-selves-improvement-will-drive-the-future-of-personal-productivity/

Andrea Maloney Schara

https://ideastoaction.wordpress.com/

Mailing address:

4122 Cheseapeak St. NW

Washington, DC 20016

cell 203-274-1069

Faculty

http://navigatingsystemsdc.com/

Your Mindful Compass: Breakthrough Strategies For Navigating Life/Work Relationships In Any Social Jungle available on Amazon
